Getting value from large datasets in a short amount of time

June 1st, 2022

One week, millions of rows, six key questions. We were asked to perform a short data analysis for NMBS, the Belgian railway company. Over one week, we investigated two related datasets (several million rows each) to help them understand their data and identify missing observations. The analysis had to be reproducible and presentable by the client afterward. Here are our tips for investigating large datasets when time is limited.

Set up your workflow correctly from the start

Before exploring data, ask: how much data do I have? How will I present results? Does the analysis need to be reproducible? For NMBS, we set up a pipeline on day one: data moved from Azure Blob Storage into Databricks, results were written back to Blob Storage, and Azure Data Factory triggered a run whenever new files arrived. A Power BI dashboard sat on top for both exploration and final presentation. This made the analysis reproducible, kept us productive during the week, and let the client run future analyses by simply uploading new files.
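To make the idea of a file-triggered, reproducible pipeline concrete, here is a minimal sketch in plain Python. It is not the code we ran for NMBS: local files stand in for Blob Storage, the standard `csv` module stands in for Databricks, and the function name `run_analysis` and the summary columns are assumptions made for illustration. The point is only that the whole analysis sits behind one function that takes an input location and an output location, so a trigger can call it unchanged for every new file.

```python
import csv
from collections import Counter
from pathlib import Path


def run_analysis(input_path: Path, output_path: Path) -> dict:
    """Count rows and empty values per column, then write a summary CSV.

    Hypothetical entry point: local paths stand in for storage locations.
    """
    missing = Counter()
    total = 0
    with input_path.open(newline="") as f:
        reader = csv.DictReader(f)
        columns = reader.fieldnames or []
        for row in reader:
            total += 1
            for col in columns:
                # Treat absent, empty, or whitespace-only cells as missing.
                if not (row[col] or "").strip():
                    missing[col] += 1

    # Write the result next to other outputs so a dashboard can read it.
    output_path.parent.mkdir(parents=True, exist_ok=True)
    with output_path.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["column", "rows", "missing"])
        for col in columns:
            writer.writerow([col, total, missing[col]])

    return {"rows": total, "missing": dict(missing)}
```

Because the function has no hidden state and no hard-coded paths, rerunning it on the same file gives the same summary, which is the property the trigger-based setup relies on.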

Focus on specific examples

When faced with large volumes of data, focus on specific examples rather than just summaries. Simply saying '15% of data is missing' isn't very helpful. By investigating examples of both problematic and non-problematic observations and discussing them with the business, we discovered that many initially flagged cases were 'missing but non-problematic'. Those cases were then excluded from the final analysis.
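As a rough illustration of this way of working, the sketch below pulls a handful of flagged and unflagged rows for a discussion with the business, and then drops the flagged rows that the business has declared harmless. The helper names, the field names (`arrival_time`, `status`) and the "cancelled" rule are all hypothetical and chosen only for the example; they are not taken from the NMBS data.

```python
import random


def sample_examples(rows, is_flagged, k=5, seed=0):
    """Return up to k flagged and up to k unflagged rows for manual review."""
    rng = random.Random(seed)  # fixed seed so the same examples come back
    flagged = [r for r in rows if is_flagged(r)]
    clean = [r for r in rows if not is_flagged(r)]
    return (
        rng.sample(flagged, min(k, len(flagged))),
        rng.sample(clean, min(k, len(clean))),
    )


def exclude_expected_gaps(rows, is_flagged, is_expected_gap):
    """Keep flagged rows, minus those the business marked as non-problematic."""
    return [r for r in rows if is_flagged(r) and not is_expected_gap(r)]


# Hypothetical data: an observation counts as missing without an arrival time.
rows = [
    {"id": 1, "arrival_time": "08:01", "status": "ran"},
    {"id": 2, "arrival_time": None, "status": "ran"},
    {"id": 3, "arrival_time": None, "status": "cancelled"},
    {"id": 4, "arrival_time": "09:15", "status": "ran"},
]


def missing_arrival(row):
    return row["arrival_time"] is None


def cancelled(row):
    # Assumed business rule: a cancelled train has no arrival by design.
    return row["status"] == "cancelled"


to_review, to_compare = sample_examples(rows, missing_arrival, k=2)
problematic = exclude_expected_gaps(rows, missing_arrival, cancelled)
```

In this toy data, two rows are flagged as missing, but only one of them remains once the assumed business rule is applied.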

Communication, specific goals, and documentation

With limited time, daily calls and close contact with the client prevent wasted hours on low-priority work. We agreed on six specific questions upfront and presented conclusions at the end of the week. We spent our last half-day on documentation alone. That was ten percent of our total working time, but without it the entire analysis would have been practically worthless for future use.

Explore more

Data-driven companies: when being good enough is no longer sufficient

Data-driven companies outperform their competitors, yet 9 out of 10 firms point to cultural challenges as the biggest bottleneck. Three practical recommendations for becoming data-driven.

Cognitive Search: unlocking value from unstructured data

How Tafuta, Arinti's cognitive search platform, helps organisations retrieve value from unstructured data using NLP, speech-to-text, and image processing.

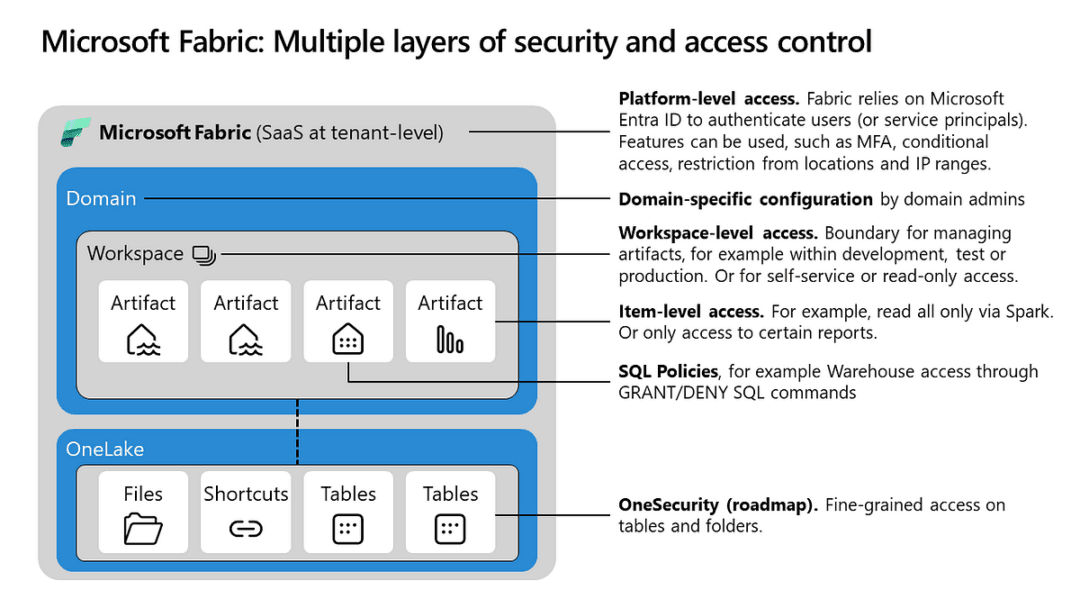

Redefining data management for organisations with Microsoft Fabric

Microsoft Fabric unifies diverse data management tools into a cohesive SaaS platform. This article explores its architecture and how they empower organisations to optimise data operations.

Related Cases

Forecasting sales across 14 product categories

Sales forecasting for Unilever Belgium — predicting daily and monthly sales volumes across 14 product categories using category-specific machine learning models, with Power BI dashboards for the commercial team.

Governed Databricks data platform for global operations

Volvo Logistics distributes over 700,000 spare parts worldwide. We built a governed Databricks data platform that unified operational data from six global sites — delivering up to 40% efficiency gains, 99% reduction in pipeline latency, and self-service analytics for business users.