Governed Databricks data platform for global operations

Volvo Logistics runs the spare parts supply chain for Volvo's aftermarket worldwide — distributing over 700,000 spare parts across six global sites. The data behind those operations was spread across mainframes, SAP, streaming feeds, and legacy systems, with no shared definitions and no unified governance. We built SMLCloud — a governed Azure Databricks platform that unified all operational, warehouse, and supply chain data into analytics-ready data products.

Aftermarket logistics at global scale

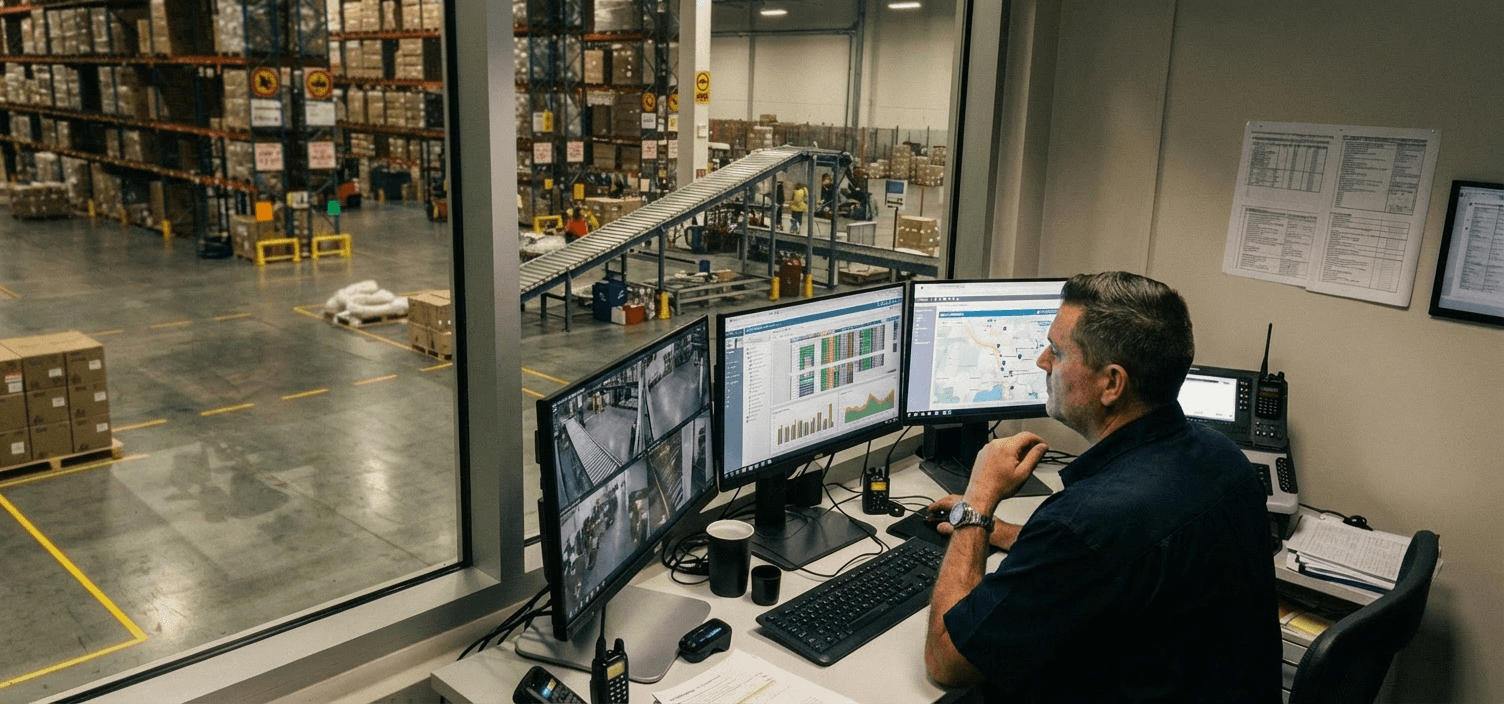

The right part needs to reach the right workshop, fast. Volvo Logistics' SML division is responsible for getting spare parts from suppliers to dealers and workshops worldwide. It is a high-volume, time-sensitive operation where planning, warehousing, and transport decisions all depend on accurate, current data.

Before this project, that data was spread across dozens of source systems. Mainframes held operational records. SAP held transactional data. Middleware such as IBM CDC and IBM MQ captured and relayed changes from source systems. Azure Event Hubs carried live signals from equipment and processes. Each domain (Warehousing, Transport, Planning) maintained its own extracts and its own version of the numbers. Scheduled pipelines ran alongside live data feeds, uncoordinated and often duplicating effort. Governance had been applied inconsistently. When business users needed a new report, the request went into an IT queue and could take weeks to surface. Some requests did not surface at all.

The structural gap

The visible problem was fragmented reporting. The underlying issue: Volvo Logistics had no single, governed data layer that could serve all domains with consistent definitions and controlled access. Years of mergers and acquisitions had left systems that had never been designed to talk to each other. Inventory planning occurred warehouse by warehouse, with limited visibility into what resources could be shared. What was needed was a platform that could ingest from every source system, standardise definitions across domains, enforce governance at every layer, and still move fast enough for operational decision-making. We scoped and built that platform together with the SML team.

SMLCloud: the Databricks data platform

SMLCloud is a large-scale Azure Databricks platform that unifies all of Volvo Logistics' operational, warehouse, and supply chain data into governed, analytics-ready data products. The architecture follows a medallion pattern — a layered approach where data gets progressively cleaner as it moves from raw ingestion to business-ready outputs.

Bronze: raw data, preserved as-is

Raw data from every source system lands in the Bronze layer unmodified: mainframe dumps, SAP extracts, change feeds from middleware, live streams from Event Hubs. Nothing is filtered or altered. This creates an auditable record of everything entering the platform, regardless of format or origin.
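The Bronze principle can be sketched in plain Python. This is a minimal illustration, not SMLCloud's code: `BronzeTable` and `BronzeRecord` are hypothetical stand-ins for an append-only table, and the SHA-256 checksum is an assumed auditing aid rather than a documented platform feature. Each payload is stored byte for byte with its source and landing time, and nothing is ever updated or deleted.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class BronzeRecord:
    """One raw payload plus the metadata needed to audit where it came from."""
    source_system: str  # hypothetical labels such as "sap" or "mainframe"
    payload: bytes      # raw bytes exactly as received; never parsed or modified
    ingested_at: str    # UTC landing timestamp (ISO 8601)
    checksum: str       # SHA-256 of the payload, to detect later alteration


class BronzeTable:
    """Hypothetical in-memory stand-in for an append-only Bronze table."""

    def __init__(self) -> None:
        self._rows: list[BronzeRecord] = []

    def land(self, source_system: str, payload: bytes) -> BronzeRecord:
        """Append one payload unchanged, stamped with source, time, and checksum."""
        record = BronzeRecord(
            source_system=source_system,
            payload=payload,
            ingested_at=datetime.now(timezone.utc).isoformat(),
            checksum=hashlib.sha256(payload).hexdigest(),
        )
        self._rows.append(record)  # append only: there is no update or delete method
        return record

    def rows(self) -> tuple[BronzeRecord, ...]:
        """Return a read-only snapshot of everything landed so far."""
        return tuple(self._rows)

    def verify(self) -> bool:
        """Recompute every checksum to confirm no payload changed after landing."""
        return all(
            hashlib.sha256(r.payload).hexdigest() == r.checksum for r in self._rows
        )


bronze = BronzeTable()
bronze.land("sap", b'{"MATNR": "000123", "WERKS": "GB01"}')
bronze.land("mainframe", b"PART0001234  QTY00042")
print(len(bronze.rows()), bronze.verify())  # 2 True
```

Keeping Bronze free of parsing means a format change upstream can never lose data at the door; interpretation is deferred to Silver, where it can be corrected and replayed.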

Silver: standardised and enriched

A single ingestion framework handles the transformation work across all source systems. It is driven by configuration rather than custom code: adapting when data structures change, enforcing data types and validation rules, and tracking how records evolve over time. Mapping tables standardise definitions so that a shipment means the same thing whether it originates in Warehousing or Transport.
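A configuration-driven Silver step might look roughly like the sketch below. It assumes a hypothetical per-source config (column renames, target types, validation rules) and a hypothetical mapping table that translates each domain's local status codes into one shared definition; in practice such rules would more likely live in YAML or metadata tables than in Python lambdas. Tracking how records evolve is shown as a Slowly Changing Dimension Type 2 merge, a common technique for this and an assumption here, since the case study does not name the history model SMLCloud uses.

```python
from datetime import date
from typing import Any, Optional

# Hypothetical per-source configuration: onboarding a source means adding an entry here.
SOURCE_CONFIG: dict[str, dict[str, Any]] = {
    "warehousing": {
        "rename": {"shp_id": "shipment_id", "stat": "status"},
        "types": {"shipment_id": str, "qty": int},
        "rules": {"qty": lambda v: v >= 0},
    },
    "transport": {
        "rename": {"consignment_no": "shipment_id", "state": "status"},
        "types": {"shipment_id": str, "qty": int},
        "rules": {"qty": lambda v: v >= 0},
    },
}

# Hypothetical mapping table: (domain, local code) -> one shared status definition.
STATUS_MAPPING: dict[tuple[str, str], str] = {
    ("warehousing", "PKD"): "PACKED",
    ("warehousing", "DSP"): "DISPATCHED",
    ("transport", "10"): "PACKED",
    ("transport", "20"): "DISPATCHED",
}


def to_silver(source: str, raw: dict[str, Any]) -> dict[str, Any]:
    """Standardise one raw record using only the configuration for its source."""
    cfg = SOURCE_CONFIG[source]
    # Rename to shared column names; unmapped columns pass through unchanged,
    # so a new upstream column does not break the step.
    row = {cfg["rename"].get(k, k): v for k, v in raw.items()}
    # Enforce the declared data types.
    for col, typ in cfg["types"].items():
        row[col] = typ(row[col])
    # Apply validation rules and reject rows that fail.
    for col, rule in cfg["rules"].items():
        if not rule(row[col]):
            raise ValueError(f"{source}: {col}={row[col]!r} failed validation")
    # Translate the domain-specific status code through the mapping table.
    row["status"] = STATUS_MAPPING[(source, str(row["status"]))]
    return row


def apply_scd2(history: list[dict[str, Any]], key: str,
               new_row: dict[str, Any], as_of: date) -> None:
    """SCD Type 2: close the open version of a key if it changed, then append a new one."""
    current: Optional[dict[str, Any]] = next(
        (h for h in history if h[key] == new_row[key] and h["valid_to"] is None), None
    )
    if current is not None:
        if all(current.get(k) == v for k, v in new_row.items()):
            return  # nothing changed: keep the open version as it is
        current["valid_to"] = as_of
    history.append({**new_row, "valid_from": as_of, "valid_to": None})


w = to_silver("warehousing", {"shp_id": 1001, "qty": "5", "stat": "PKD"})
t = to_silver("transport", {"consignment_no": "1001", "qty": 5, "state": 20})
print(w)  # {'shipment_id': '1001', 'qty': 5, 'status': 'PACKED'}

history: list[dict[str, Any]] = []
apply_scd2(history, "shipment_id", w, date(2024, 1, 1))
apply_scd2(history, "shipment_id", t, date(2024, 1, 2))
print([(h["status"], h["valid_to"]) for h in history])
```

Both domains now describe shipment `1001` with the same column names and the same status vocabulary, and the history list keeps the earlier `PACKED` version closed off rather than overwritten.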

Gold: business-ready data products

Curated datasets serve Power BI dashboards, operational applications, and Databricks' AI/BI tools. Each dataset is organised per domain and governed through Unity Catalog, so Warehousing sees its data products and Transport sees its own. Access is controlled down to the level of individual dataset structures.
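As an illustration of a Gold data product, the hypothetical function below aggregates Silver stock rows into one row per warehouse, with the number of distinct parts and the total stock value. Column names, warehouse codes, and the use of `Decimal` for money are assumptions for the sketch; on the platform, a result like this would be written to a domain schema in Unity Catalog so that only the owning domain's users can read it.

```python
from collections import defaultdict
from decimal import Decimal
from typing import Any


def build_stock_value_by_warehouse(silver_stock: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Aggregate Silver stock rows into a Gold data product: one row per warehouse."""
    totals: dict[str, Decimal] = defaultdict(Decimal)  # missing keys start at Decimal('0')
    parts: dict[str, set[str]] = defaultdict(set)
    for row in silver_stock:
        wh = row["warehouse"]
        # Multiply as Decimal so currency values do not pick up float rounding errors.
        totals[wh] += Decimal(row["qty"]) * Decimal(row["unit_cost"])
        parts[wh].add(row["part_no"])
    return [
        {"warehouse": wh, "distinct_parts": len(parts[wh]), "stock_value": totals[wh]}
        for wh in sorted(totals)
    ]


# Hypothetical Silver rows; unit_cost is kept as a string to preserve exact decimals.
silver_stock = [
    {"warehouse": "WH-A", "part_no": "P1", "qty": 2, "unit_cost": "10.50"},
    {"warehouse": "WH-A", "part_no": "P2", "qty": 1, "unit_cost": "4.00"},
    {"warehouse": "WH-B", "part_no": "P1", "qty": 3, "unit_cost": "10.50"},
]
for row in build_stock_value_by_warehouse(silver_stock):
    print(row)
```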

Governance built into every pipeline

Unity Catalog manages every dataset, every report view, and every access permission on the platform. We migrated more than 30 domain schemas from the previous catalogue system (Hive Metastore), standardised access controls, and ensured that all automated processes run under controlled, auditable identities rather than personal accounts. Access is role-based: a transport analyst sees transport data, not warehouse financials. Data lineage is tracked end to end — any number can be traced back to its source. Governance here is not a policy document. It is built into the platform's daily operation: every pipeline, every deployment, every access request runs through it.
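Role-based access of this kind is typically expressed as Unity Catalog grants to account groups. The sketch below generates those SQL statements from a hypothetical role-to-domain mapping; the catalog name `sml`, the group names, and the `<domain>_gold` schema convention are all assumptions. The privileges used (`USE CATALOG`, `USE SCHEMA`, `SELECT`) are standard Unity Catalog privileges, and in a Databricks notebook each statement could be applied with `spark.sql`.

```python
# Hypothetical mapping of account groups to the domains whose Gold data they may read.
ROLE_DOMAINS: dict[str, list[str]] = {
    "transport-analysts": ["transport"],
    "warehouse-analysts": ["warehousing"],
    "planning-analysts": ["planning"],
}


def grant_statements(catalog: str, role_domains: dict[str, list[str]]) -> list[str]:
    """Build Unity Catalog GRANT statements giving each group read access to its domains only."""
    stmts: list[str] = []
    for group, domains in sorted(role_domains.items()):
        # A principal needs USE CATALOG and USE SCHEMA before SELECT lets it query anything.
        stmts.append(f"GRANT USE CATALOG ON CATALOG {catalog} TO `{group}`")
        for domain in domains:
            schema = f"{catalog}.{domain}_gold"  # assumed naming convention
            stmts.append(f"GRANT USE SCHEMA ON SCHEMA {schema} TO `{group}`")
            stmts.append(f"GRANT SELECT ON SCHEMA {schema} TO `{group}`")
    return stmts


for stmt in grant_statements("sml", ROLE_DOMAINS):
    print(stmt)  # in a Databricks notebook, spark.sql(stmt) would apply it
```

Generating grants from a mapping held in version control, and running them from CI under a service principal, is one way to keep permissions reviewable and auditable rather than set by hand.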

From batch-only to near-real-time

The original data flows ran on a schedule: nightly jobs that processed the previous day's records. For a logistics operation where decisions depend on what is happening now, that one-day lag was a constraint the business worked around rather than accepted.

Streaming pipelines

We introduced a real-time track alongside the scheduled pipelines. Machines send status updates via MQTT through Azure Event Grid. Each device authenticates with its own certificate, so the data stream is both secure and traceable. Spark Structured Streaming transforms the incoming signals on arrival. A lightweight database holds the latest reading per metric, and an automated function pushes it to Salesforce for rental staff worldwide. Field engineers and rental managers can now check live machine status from their phones.
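The "latest reading per metric" store can be sketched as a keyed upsert that ignores late or duplicate messages. This is plain Python under stated assumptions: `Reading` and `LatestReadingStore` are hypothetical, device timestamps are assumed to be epoch seconds, and `on_update` stands in for the function that pushes changes to Salesforce. In a Spark Structured Streaming job, the same rule would more likely run inside `foreachBatch` as a merge into a small table.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass(frozen=True)
class Reading:
    device_id: str
    metric: str
    value: float
    event_time: int  # device timestamp, assumed to be epoch seconds


class LatestReadingStore:
    """Keeps only the newest reading per (device, metric) key."""

    def __init__(self, on_update: Callable[[Reading], None]) -> None:
        self._latest: Dict[Tuple[str, str], Reading] = {}
        self._on_update = on_update  # hypothetical hook standing in for the Salesforce push

    def upsert(self, r: Reading) -> bool:
        """Store r if it is newer than what we hold; return True if it was stored."""
        key = (r.device_id, r.metric)
        current = self._latest.get(key)
        if current is not None and current.event_time >= r.event_time:
            return False  # late or duplicate message: the stored reading is newer
        self._latest[key] = r
        self._on_update(r)  # push each reading that becomes the latest for its key
        return True

    def get(self, device_id: str, metric: str) -> Optional[Reading]:
        return self._latest.get((device_id, metric))


pushed: list = []
store = LatestReadingStore(on_update=pushed.append)
store.upsert(Reading("machine-17", "engine_hours", 1520.0, 1_700_000_060))
store.upsert(Reading("machine-17", "engine_hours", 1519.5, 1_700_000_000))  # arrives late
print(store.get("machine-17", "engine_hours").value, len(pushed))  # 1520.0 1
```

Ordering by the device's event time rather than arrival time matters here: MQTT messages can arrive out of order after a reconnect, and an arrival-order store would let a stale reading overwrite a fresh one.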

Lakeflow migration

The platform is migrating its scheduled pipelines to Lakeflow Declarative Pipelines, a newer Databricks framework that removes the need for intermediate storage between processing steps. The result is up to 40% efficiency gains on routine data tasks and a 99% reduction in pipeline latency.
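To illustrate the declarative idea, the toy runner below lets each dataset declare only what it reads. The runner then works out the execution order and hands results from one step to the next in memory, with no hand-managed intermediate tables. It is a hypothetical sketch built on Python's standard `graphlib`, not the Lakeflow API: in Lakeflow Declarative Pipelines, datasets are also declared as decorated Python (or SQL) definitions, but dependencies are inferred from the reads and the framework manages orchestration and triggered or continuous execution.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, Tuple

# Registry of declared datasets: name -> (function, names of upstream datasets).
_DATASETS: Dict[str, Tuple[Callable[..., Any], Tuple[str, ...]]] = {}


def dataset(*depends_on: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Hypothetical decorator: register a function as a dataset reading depends_on."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        _DATASETS[fn.__name__] = (fn, depends_on)
        return fn
    return register


def run_pipeline() -> Dict[str, Any]:
    """Resolve execution order from the declarations and compute each dataset once."""
    graph = {name: set(deps) for name, (_, deps) in _DATASETS.items()}
    results: Dict[str, Any] = {}
    for name in TopologicalSorter(graph).static_order():
        fn, deps = _DATASETS[name]
        results[name] = fn(*(results[d] for d in deps))  # upstream results passed in memory
    return results


@dataset()
def bronze_orders():
    return [{"order": "A", "qty": "3"}, {"order": "B", "qty": "-1"}]


@dataset("bronze_orders")
def silver_orders(bronze):
    # Cast quantities and drop rows that fail a simple validity rule.
    return [{**r, "qty": int(r["qty"])} for r in bronze if int(r["qty"]) >= 0]


@dataset("silver_orders")
def gold_total_qty(silver):
    return sum(r["qty"] for r in silver)


print(run_pipeline()["gold_total_qty"])  # 3
```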

“With just a simple click, we can transition our metadata scheduling from triggered to continuous and back again, without the need to dive into complex code. Spark Declarative Pipelines can handle schema changes almost automatically. It's a nice feature.”

Data without an IT ticket

We set up Databricks AI/BI Genie Spaces as the interaction layer for business users. Genie lets people ask questions about their data in plain language and get answers drawn from the curated Gold datasets. Power BI dashboards serve structured reporting needs. Genie Spaces serve the ad hoc ones: the questions that come up in a meeting and used to require a data analyst to answer. For a growing share of the organisation's data needs, the wait between question and answer has been cut from weeks to seconds.

Delivery at scale across six sites

With hundreds of automated data jobs across dozens of domain packages, the platform needed rigorous deployment discipline. We industrialised delivery using Databricks Asset Bundles and Azure DevOps CI/CD. Every change follows a controlled path: development, quality assurance, pre-production, production. Only the packages that actually changed get deployed. Automated tests verify logic before anything touches a live environment. Code-format checks enforce consistency across the engineering team. The platform runs across six global sites, processes data from over 200 source tables, and supports the daily operations of a logistics network that serves Volvo's aftermarket worldwide. It has been running since May 2022 and continues to expand.
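The "only deploy what changed" rule can be sketched as a mapping from changed file paths to package names. The repository layout (`packages/<name>/`, each with its own bundle), the promotion tuple, and the helper names are assumptions; the changed-file list would typically come from something like `git diff --name-only`, and `databricks bundle deploy -t <target>` is the Databricks CLI command that deploys a bundle to a named target.

```python
from pathlib import PurePosixPath
from typing import List, Optional

# Assumed promotion path, mirroring the environments described above.
PROMOTION_PATH = ("dev", "qa", "preprod", "prod")


def changed_packages(changed_files: List[str], root: str = "packages") -> List[str]:
    """Return the sorted names of packages under root/<name>/ touched by the change."""
    pkgs = set()
    for f in changed_files:
        parts = PurePosixPath(f).parts
        # Only files inside a package directory count; root-level files are ignored.
        if len(parts) >= 3 and parts[0] == root:
            pkgs.add(parts[1])
    return sorted(pkgs)


def next_stage(current: str) -> Optional[str]:
    """Return the environment after current, or None once production is reached."""
    i = PROMOTION_PATH.index(current)
    return PROMOTION_PATH[i + 1] if i + 1 < len(PROMOTION_PATH) else None


def deploy_commands(packages: List[str], target: str, root: str = "packages") -> List[str]:
    """One bundle deployment per changed package, against the given bundle target."""
    return [f"cd {root}/{p} && databricks bundle deploy -t {target}" for p in packages]


files = [
    "packages/transport/src/etl.py",
    "packages/transport/databricks.yml",
    "packages/warehousing/tests/test_stock.py",
    "README.md",
]
pkgs = changed_packages(files)
print(pkgs)  # ['transport', 'warehousing']
print(deploy_commands(pkgs, next_stage("dev")))
```

A real pipeline would also need a rule for shared code, for example redeploying every package when a common library changes; that is left out here for brevity.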

“Today, we get an integrated view of where spare parts are, the value of spare parts across warehouses and the potential costs involved in shipping parts from warehouse to warehouse or dealers.”

What this made possible

Volvo Logistics started this project with a data environment that collected data but could not connect it. Four years in, the platform has evolved from batch-only processing to a hybrid architecture combining batch and real-time pipelines on one governed Databricks platform. The next steps are already in scope: predictive maintenance based on sensor patterns, data-as-a-service offerings where machine insights become a product, and continued migration to Lakeflow Declarative Pipelines for fully real-time ingestion.