From scattered onboarding emails to a single chatbot

March 15th, 2024

When new colleagues joined Arinti, onboarding information used to be scattered across emails and documents. With large language models now available, we built a chatbot that answers employee questions instantly, powered by our own Notion knowledge base.

How it works — three steps

First, document ingestion: we split all Notion onboarding content into chunks using LangChain's markdown splitter, convert each chunk into a numerical vector using an OpenAI embedding model, and store the vectors in a vector database.

Second, query: the user's question is converted into a vector using the same embedding model, then matched against the vector database via similarity search. The most relevant content chunks are passed along with the question to OpenAI's GPT-3.5 Turbo, which formulates an answer based on a structured prompt.

Third, memory: the chatbot tracks conversation history and combines previous messages with each new question into a standalone query, so follow-up questions keep their context.
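The three steps can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the production code. A word-count vector stands in for the OpenAI embedding model, an in-memory list stands in for the vector database, and a regex stands in for LangChain's markdown splitter. All function names, the sample content, and the prompt wording are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical sample content standing in for exported Notion pages.
ONBOARDING_MD = """# Laptop setup
Ask IT for a laptop on your first day.

# Holidays
Request holidays through the HR portal.
"""


def split_markdown(text: str) -> list[str]:
    """Split markdown into one chunk per heading section."""
    sections = re.split(r"(?m)^(?=#{1,6} )", text)
    return [s.strip() for s in sections if s.strip()]


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(markdown: str) -> list[tuple[str, Counter]]:
    """Step 1, ingestion: chunk the content and store (chunk, vector) pairs."""
    return [(chunk, embed(chunk)) for chunk in split_markdown(markdown)]


def search(index: list[tuple[str, Counter]], question: str, k: int = 2) -> list[str]:
    """Step 2, query: embed the question and return the k most similar chunks."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question: str, chunks: list[str]) -> str:
    """Combine retrieved chunks and the question into a structured prompt."""
    context = "\n\n".join(chunks)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def condense_prompt(history: list[tuple[str, str]], question: str) -> str:
    """Step 3, memory: ask the model to rewrite a follow-up as a standalone question."""
    turns = "\n".join(f"{role}: {text}" for role, text in history)
    return (
        "Rewrite the follow-up as a standalone question.\n\n"
        f"Chat history:\n{turns}\n\nFollow-up: {question}\nStandalone question:"
    )


index = build_index(ONBOARDING_MD)
top = search(index, "How do I request holidays?", k=1)
prompt = build_prompt("How do I request holidays?", top)
```

Here `prompt` is what would be sent to the chat model. In a real deployment, `embed` would call the embedding API, and the output of `condense_prompt` would go to the model first so that the rewritten question is what gets embedded and searched.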

Automation and deployment

Azure Functions automatically fetch new Notion content on a daily basis, keeping the chatbot's knowledge up to date. The front-end is built with Streamlit and embedded directly into our Notion workspace — so employees access it without switching tools. The same architecture works for any knowledge base: FAQ pages, project documentation, or policy documents. As long as the content is stored somewhere, it can serve as the foundation for a chatbot.
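One way a daily refresh could decide what to re-ingest is sketched below. The article does not say whether the job re-processes everything or only changed pages, so the incremental approach here is an assumption, as are the function names and the shape of the page metadata. In a deployment, a scheduled function would call something like `pages_to_refresh` once a day and re-embed only the returned pages.

```python
from datetime import datetime, timezone


def parse_timestamp(value: str) -> datetime:
    """Parse an ISO 8601 timestamp; a trailing 'Z' is treated as UTC."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def pages_to_refresh(pages: list[dict], last_sync: datetime) -> list[str]:
    """Return the ids of pages edited after the previous sync."""
    return [
        page["id"]
        for page in pages
        if parse_timestamp(page["last_edited_time"]) > last_sync
    ]


# Hypothetical page metadata; the field names are assumptions for illustration.
pages = [
    {"id": "welcome", "last_edited_time": "2024-03-10T08:00:00Z"},
    {"id": "holidays", "last_edited_time": "2024-03-14T16:30:00Z"},
]
last_sync = datetime(2024, 3, 14, 3, 0, tzinfo=timezone.utc)
changed = pages_to_refresh(pages, last_sync)
```

Re-embedding only the changed pages keeps the daily run cheap, at the cost of storing the time of the last successful sync somewhere durable.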

Explore more

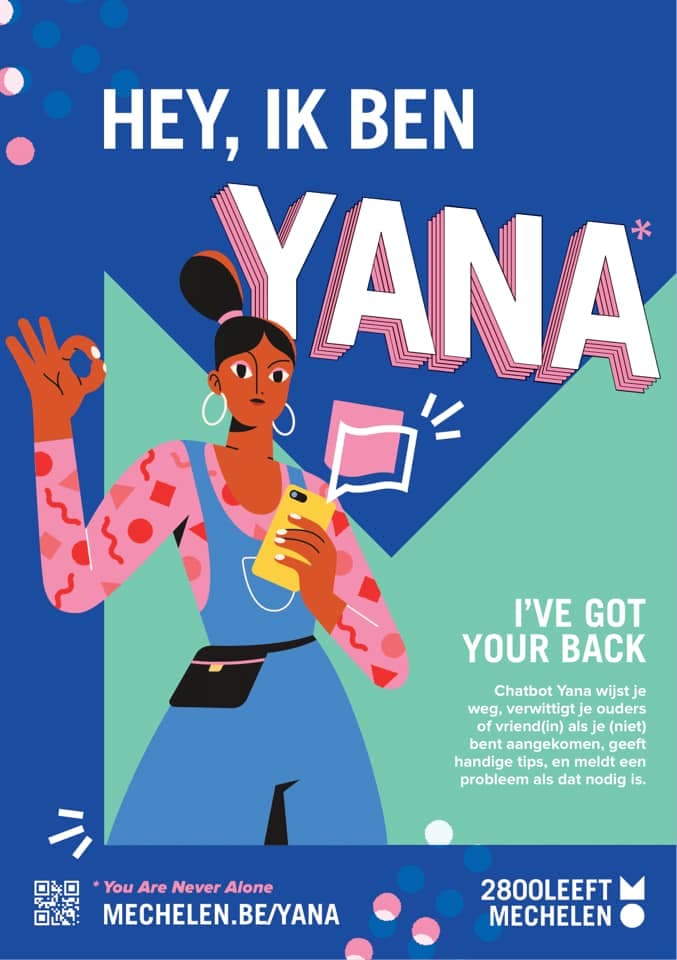

Redeeming the chatbot: more than easy customer interactions

Chatbots are more than marketing gimmicks. From virtual assistants and safety buddies to symptom checkers during COVID-19 — here's an overview of different chatbot types and how we build them.

Chatbot human handover with Microsoft Teams

How Arinti enables seamless handover from chatbot to human agent through Microsoft Teams integration.

3 Different Flavors For Building Chatbots With Microsoft

Microsoft offers three tools for building chatbots: Power Virtual Agent (no-code), Bot Framework Composer (low-code), and Bot Framework SDK (full flexibility). Here's how they compare and who each one is for.

Related Cases

Chatbot Bertje - digital citizen services for the City of Roeselare

Chatbot Bertje answers citizen questions for the City of Roeselare across all municipal domains, 24/7 — built with a database of 1,000 questions, NLP-based training, and automatic routing to the city's complaint handling system. Live since October 2019.

Business process automation for HR services

Chatbot Louise automates end-of-contract procedures at Partena Professional — reducing dismissal advice from 60+ minutes to 15–20 minutes, serving 900 payroll consultants daily, and going from prototype to production in three months.

GenAI-powered virtual assistant for citizen services

AI-powered virtual assistant for citizen services - the first GenAI-powered chatbot on a Flemish municipal website, combining conversational AI with semantic search to answer citizen questions in plain Dutch, directly from the city's own content.
