Bachelor's thesis: Converting sign language into text using AI

September 1st, 2020

Flemish Sign Language (VGT) is the mother tongue of approximately 6,000 Flemish people, yet only a small fraction of the population understands it. This bachelor's thesis investigated whether AI can ease communication between sign language users and spoken-language users by converting gestures captured on camera into written text.

Technical approach

Because video content recognition was not yet mature enough, the approach splits each gesture video into frames and sends selected frames to an image classification API. Five frames are extracted per gesture as a trade-off between performance and accuracy. The thesis compared three services: Azure Custom Vision, Google AutoML Vision, and Amazon Rekognition Custom Labels.
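As a rough illustration of the frame-based approach, the sketch below picks five evenly spaced frame indices from a clip and combines per-frame classifier results into one gesture label. The summary does not say how the thesis chose its frames or merged the per-frame predictions, so the even spacing, the probability-weighted vote, and the names `select_frame_indices` and `majority_label` are all assumptions made for this example. The call to the classification API itself is left out.

```python
from collections import defaultdict
from typing import List, Optional, Tuple


def select_frame_indices(total_frames: int, n_frames: int = 5) -> List[int]:
    """Return up to n_frames indices spread evenly across a clip.

    Assumption: even spacing; the thesis may have selected frames differently.
    """
    if total_frames <= 0 or n_frames <= 0:
        return []
    n_frames = min(n_frames, total_frames)
    # Split the clip into n_frames equal segments and take each midpoint.
    step = total_frames / n_frames
    return [int(step * i + step / 2) for i in range(n_frames)]


def majority_label(frame_predictions: List[Tuple[str, float]]) -> Optional[str]:
    """Combine (label, probability) pairs from several frames into one label.

    Assumption: a probability-weighted vote; this is a hypothetical
    aggregation rule, not one taken from the thesis.
    """
    if not frame_predictions:
        return None
    scores = defaultdict(float)
    for label, probability in frame_predictions:
        scores[label] += probability
    return max(scores, key=scores.get)


if __name__ == "__main__":
    # A 50-frame clip, five frames per gesture as described in the text.
    print(select_frame_indices(50))
    # Hypothetical per-frame results, as an image classifier might return them.
    print(majority_label([("hello", 0.9), ("thanks", 0.6), ("hello", 0.7)]))
```

Weighting votes by probability lets one confident frame outweigh several uncertain ones; a plain count of labels would be an equally reasonable choice.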

Training and results

Using Azure Custom Vision, the model was trained with about 50 images per gesture as a starting point. Three properties of the training images determine model quality: quantity, balance across labels, and variety (backgrounds, lighting, angles). A feedback mechanism lets users flag incorrect translations, enabling continuous improvement.

The proof of concept confirmed that image recognition technology has advanced far enough to convert sign language into text. In the reverse direction, the VGT dictionary is used to display signs for spoken or typed input.

Student: Yasmine De Winne, University College Ghent, co-promoted by Wouter Baetens
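The quantity and balance criteria for training images can be checked before uploading a dataset. The sketch below is one possible way to do that, not the procedure used in the thesis. The function name `dataset_report`, the `max_imbalance` threshold of 2.0, and the gesture labels are assumptions; only the figure of roughly 50 images per gesture comes from the text. Variety (backgrounds, lighting, angles) cannot be judged from label counts and still needs a manual review.

```python
from collections import Counter
from typing import Dict, List


def dataset_report(
    labels: List[str],
    min_per_label: int = 50,      # starting point mentioned in the text
    max_imbalance: float = 2.0,   # assumed threshold, chosen for illustration
) -> Dict[str, object]:
    """Summarise image counts per gesture label and flag obvious problems."""
    counts = Counter(labels)
    if not counts:
        return {"counts": {}, "too_few": [], "imbalance_ratio": None, "balanced": True}
    # Labels that have fewer images than the suggested starting point.
    too_few = sorted(label for label, n in counts.items() if n < min_per_label)
    # Ratio between the largest and the smallest class.
    ratio = max(counts.values()) / min(counts.values())
    return {
        "counts": dict(counts),
        "too_few": too_few,
        "imbalance_ratio": ratio,
        "balanced": ratio <= max_imbalance,
    }


if __name__ == "__main__":
    # Hypothetical dataset: one label per training image.
    image_labels = ["hello"] * 60 + ["thanks"] * 20
    print(dataset_report(image_labels))
```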

Explore more

Build your own image dataset with Bing Image Search API

A step-by-step Python guide for building your own image dataset using the Bing Image Search API — from search and download to storing results in Azure Machine Learning Studio.

Internship report: Using AI to personalise medical questionnaires

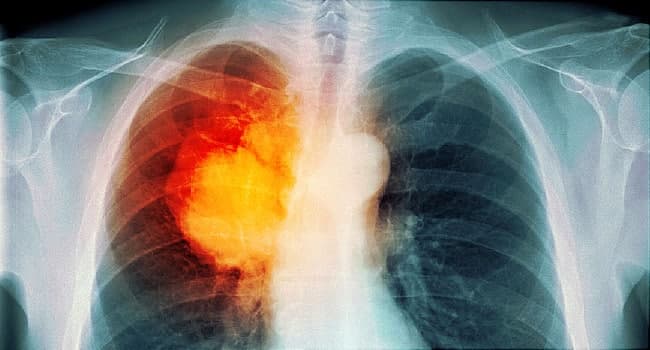

Intern Usman Dankoly researched AI techniques to personalize PRO-CTCAE questionnaires for lung cancer patients — using K-means clustering and personalised recommendation scores to reduce questionnaire burden.

Internship report: K-Means clustering EWS data

Intern Senne built a patient monitoring dashboard with K-Means clustering on Early Warning Score data — categorising patients by health trajectory to support clinical decision-making.